Amazon Web Services (AWS) is a major cloud services platform used by companies around the globe. Its cost-effectiveness and agility have helped brands of all categories, verticals, and sizes scale their services quickly and efficiently.

With many organizations now leveraging AWS resources to develop, build, and run business-critical applications in the cloud, it is important to track and monitor the performance of these services in real time to avoid unexpected issues.

With ManageEngine Applications Manager, you can stay on top of your AWS game by getting notified about issues and taking remedial action to resolve them promptly.

Let’s take a look at the key AWS performance metrics to monitor for effective maintenance and management of your AWS resources.

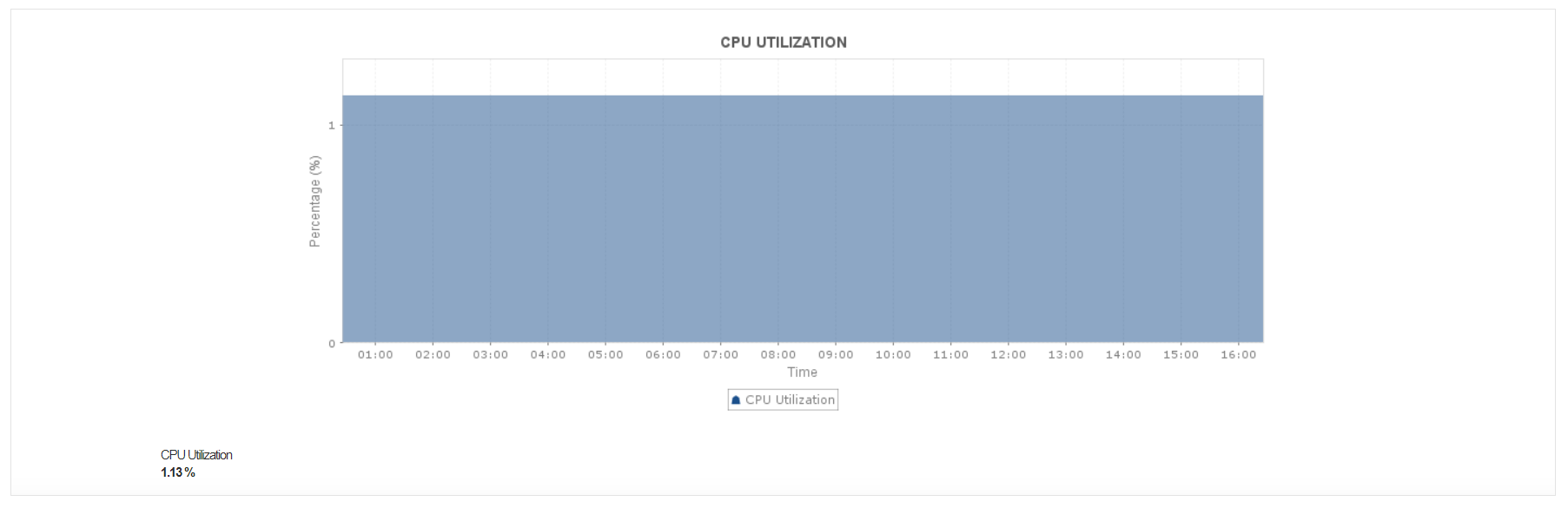

CPU usage

The move to the cloud has changed the way we build applications. Running workloads on virtual machines has expanded monitoring possibilities by making resource management easier and quicker. If you use AWS compute resources such as EC2 or ECS to run your workloads, CPU usage is a key indicator of application performance.

- For EC2 instances, monitoring CPU usage shows whether they are overutilized or underutilized.
- If you use ECS, that is, Docker-enabled applications packaged as containers and run across a cluster of EC2 instances, watching CPU usage at the container level helps pinpoint the applications that consume a significant share of your resources.

Applications Manager’s AWS monitoring capabilities help you track the CPU usage of EC2 instances and ECS containers. You can also configure an alarm to be notified when the value crosses a set threshold.
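To make the threshold idea concrete, here is a minimal sketch of how a CPU alarm can be evaluated over a stream of utilization samples. It mimics the "M out of N datapoints" style that Amazon CloudWatch alarms use, but it is a simplification (for example, it reports INSUFFICIENT_DATA only until the window fills and ignores missing-data handling). The function name, the 80% threshold, and the sample values are hypothetical, and this is not Applications Manager's own alerting logic.

```python
from collections import deque
from typing import Iterable, List


def evaluate_cpu_alarm(
    samples: Iterable[float],
    threshold: float = 80.0,      # hypothetical CPU % threshold
    evaluation_periods: int = 5,  # N: how many recent samples to look at
    datapoints_to_alarm: int = 3, # M: how many of them must breach
) -> List[str]:
    """Return the alarm state after each CPU utilization sample (percent).

    The alarm is in ALARM when at least `datapoints_to_alarm` of the last
    `evaluation_periods` samples exceed `threshold`.
    """
    window: deque = deque(maxlen=evaluation_periods)  # sliding window of breach flags
    states: List[str] = []
    for value in samples:
        window.append(value > threshold)
        if len(window) < evaluation_periods:
            states.append("INSUFFICIENT_DATA")  # not enough history yet
        elif sum(window) >= datapoints_to_alarm:
            states.append("ALARM")
        else:
            states.append("OK")
    return states


if __name__ == "__main__":
    cpu = [45, 85, 90, 50, 88, 40, 35, 30]  # hypothetical 5-minute averages
    print(evaluate_cpu_alarm(cpu))
    # ['INSUFFICIENT_DATA', 'INSUFFICIENT_DATA', 'INSUFFICIENT_DATA',
    #  'INSUFFICIENT_DATA', 'ALARM', 'ALARM', 'OK', 'OK']
```

Requiring several breaching datapoints rather than one keeps a single short CPU spike from triggering a notification, at the cost of alerting a few periods later.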

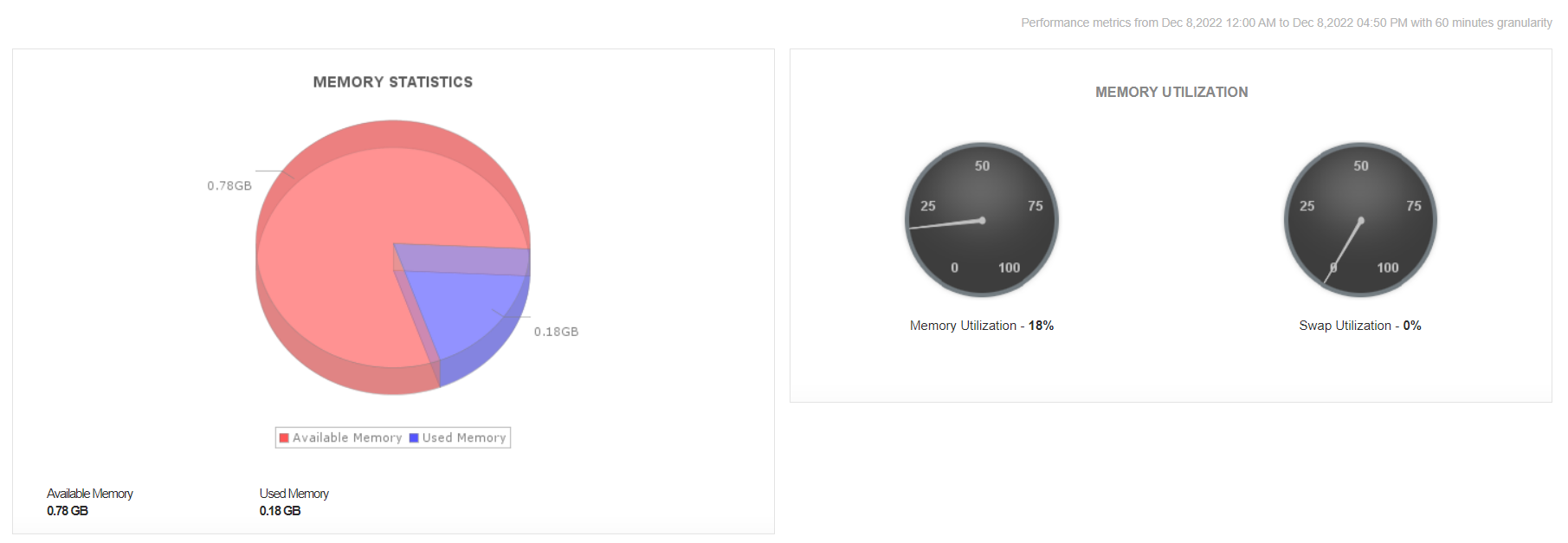

Memory usage

Efficient memory management ensures optimum performance of applications. Keeping an eye on memory usage helps identify and isolate performance hiccups in the AWS infrastructure.

- In ECS, it is crucial to monitor container-level memory metrics to make sure tasks scale as required. Setting appropriate hard memory limits keeps a single container, such as one with a memory leak, from exhausting the memory shared with other tasks on the same instance; keep in mind that ECS stops a container that exceeds its hard limit.
- For EKS clusters, monitoring node-level and pod-level memory usage helps you decide when to scale the cluster and confirm that it can run workloads without pods being evicted or important processes being terminated.

With Applications Manager’s AWS monitoring tool, you can monitor the memory utilization of your ECS and EKS clusters, along with other related performance metrics, from a single console. You can also analyze trend reports and use ML-based reporting to predict usage and plan capacity.
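The idea behind usage forecasting can be illustrated with the simplest possible model. The sketch below is not Applications Manager's ML-based forecasting; as a plain stand-in, it fits an ordinary least-squares line to daily memory utilization samples and estimates how many days remain until the trend crosses a capacity threshold. The function name, the 90% threshold, and the sample data are hypothetical.

```python
from typing import Optional, Sequence


def days_until_threshold(
    usage_percent: Sequence[float], threshold: float = 90.0
) -> Optional[float]:
    """Estimate days until memory usage reaches `threshold`.

    Fits y = a + b*x by ordinary least squares, where x is the day index
    (0, 1, 2, ...). Returns the number of days after the last sample at
    which the fitted line reaches `threshold`, 0.0 if it already has, or
    None if usage is flat or falling.
    """
    n = len(usage_percent)
    if n < 2:
        raise ValueError("need at least two samples to fit a trend")
    xs = range(n)
    mean_x = (n - 1) / 2
    mean_y = sum(usage_percent) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, usage_percent))
    slope = sxy / sxx
    if slope <= 0:
        return None  # no upward trend, so no projected crossing
    intercept = mean_y - slope * mean_x
    crossing_x = (threshold - intercept) / slope  # day index where line hits threshold
    return max(0.0, crossing_x - (n - 1))


if __name__ == "__main__":
    daily_memory = [50, 52, 54, 56, 58]  # hypothetical daily averages, in percent
    print(days_until_threshold(daily_memory))  # 16.0
```

A straight line is only a rough guide: real workloads often have weekly cycles or step changes, which is where richer forecasting models earn their keep.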

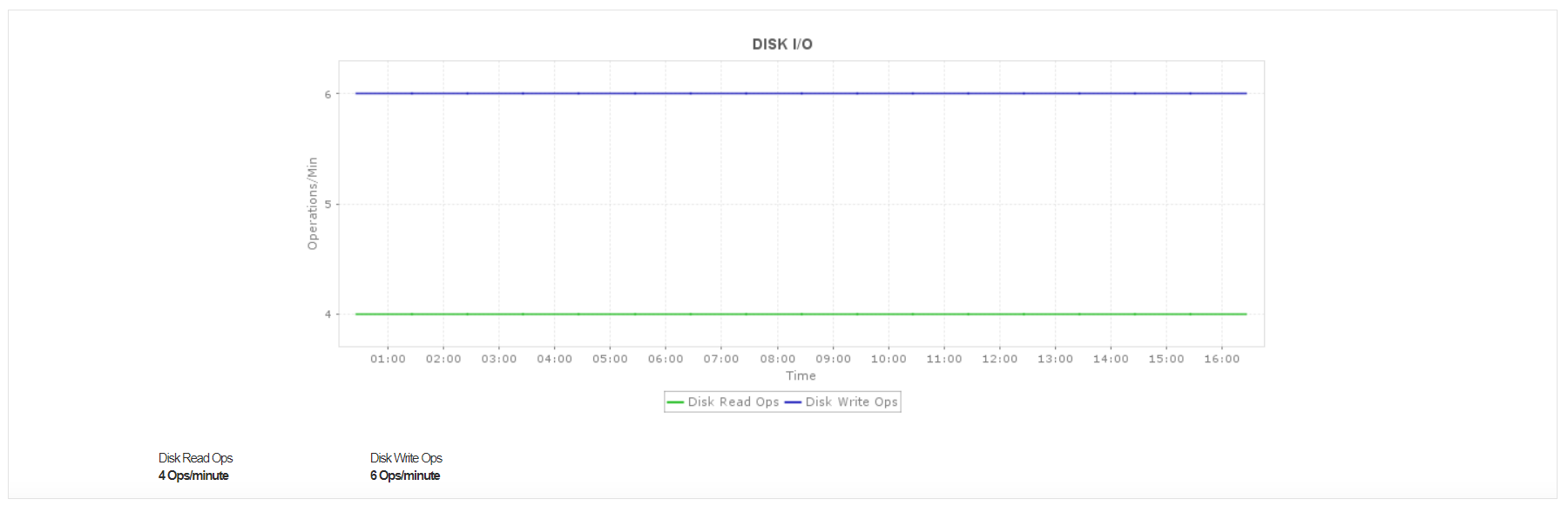

Disk I/O

Disk I/O metrics show the volume of data being read from and written to the disks attached to your AWS instances. Tracking them can help you pinpoint and resolve application-level problems.

For instance, if your disks consistently record a high number of read/write operations, you can reduce the load on them by adding a caching mechanism. This can reduce disk queuing and, in turn, the likelihood of sudden performance degradation in your mission-critical applications.
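How much a cache helps depends on how often the same data is read again. The sketch below uses Python's `functools.lru_cache` as a stand-in for whatever caching layer you would actually deploy, replays a sequence of key lookups through it, and counts how many lookups reach the "disk". The function name, the dictionary standing in for data on an EBS volume, and the workloads are all hypothetical.

```python
from functools import lru_cache
from typing import Dict, Iterable, Tuple


def simulate_reads(
    keys: Iterable[str], store: Dict[str, bytes], cache_size: int = 128
) -> Tuple[int, int]:
    """Replay key lookups through a read-through LRU cache.

    `store` stands in for data on a disk volume; every cache miss counts
    as one disk read. Returns (disk_reads, cache_hits).
    """
    disk_reads = 0

    def read_from_disk(key: str) -> bytes:
        nonlocal disk_reads
        disk_reads += 1  # only cache misses get here
        return store[key]

    # Wrap the disk read in a fresh LRU cache for this simulation run.
    cached_read = lru_cache(maxsize=cache_size)(read_from_disk)
    for key in keys:
        cached_read(key)
    return disk_reads, cached_read.cache_info().hits


if __name__ == "__main__":
    store = {f"key-{i}": b"data" for i in range(5)}
    workload = [f"key-{i % 5}" for i in range(1000)]  # 1,000 reads over 5 hot keys
    print(simulate_reads(workload, store))                # (5, 995)
    print(simulate_reads(workload, store, cache_size=2))  # (1000, 0)
```

The second run shows why sizing matters: when the cache is smaller than the set of keys being cycled through, LRU eviction throws away each entry just before it is needed again, so every read still hits the disk.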

Requests

The Request Count metric indicates the number of requests received by your load balancer, and routed to the instances behind it, during a given period.

Monitoring this metric helps you spot an abnormal number of incoming requests, which can point to an underlying issue in your AWS instances such as a faulty configuration or a DNS-related problem. If the number of requests keeps rising, you may need to adjust the number of instances registered behind your load balancer.
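What counts as "abnormal" needs a baseline. As one simple, hypothetical approach (a rolling z-score, not the method Applications Manager or CloudWatch anomaly detection uses), the sketch below flags any period whose request count is more than three standard deviations away from the preceding window of periods. The function name, window length, threshold, and counts are assumptions for illustration.

```python
from statistics import mean, stdev
from typing import List, Sequence


def flag_abnormal_counts(
    counts: Sequence[int], baseline_window: int = 12, z_threshold: float = 3.0
) -> List[int]:
    """Return indices of periods whose request count deviates from the
    preceding `baseline_window` periods by more than `z_threshold`
    sample standard deviations."""
    if baseline_window < 2:
        raise ValueError("baseline_window must be at least 2")
    flagged: List[int] = []
    for i in range(baseline_window, len(counts)):
        baseline = counts[i - baseline_window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Perfectly steady baseline: any change at all is unusual.
            if counts[i] != mu:
                flagged.append(i)
            continue
        if abs(counts[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    # Hypothetical per-minute request counts with one sudden burst.
    counts = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 99, 100, 450, 100]
    print(flag_abnormal_counts(counts))  # [12]
```

One trade-off of this design: once a spike enters the baseline window, it inflates the standard deviation for the next several periods and makes the detector less sensitive until it rolls out.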

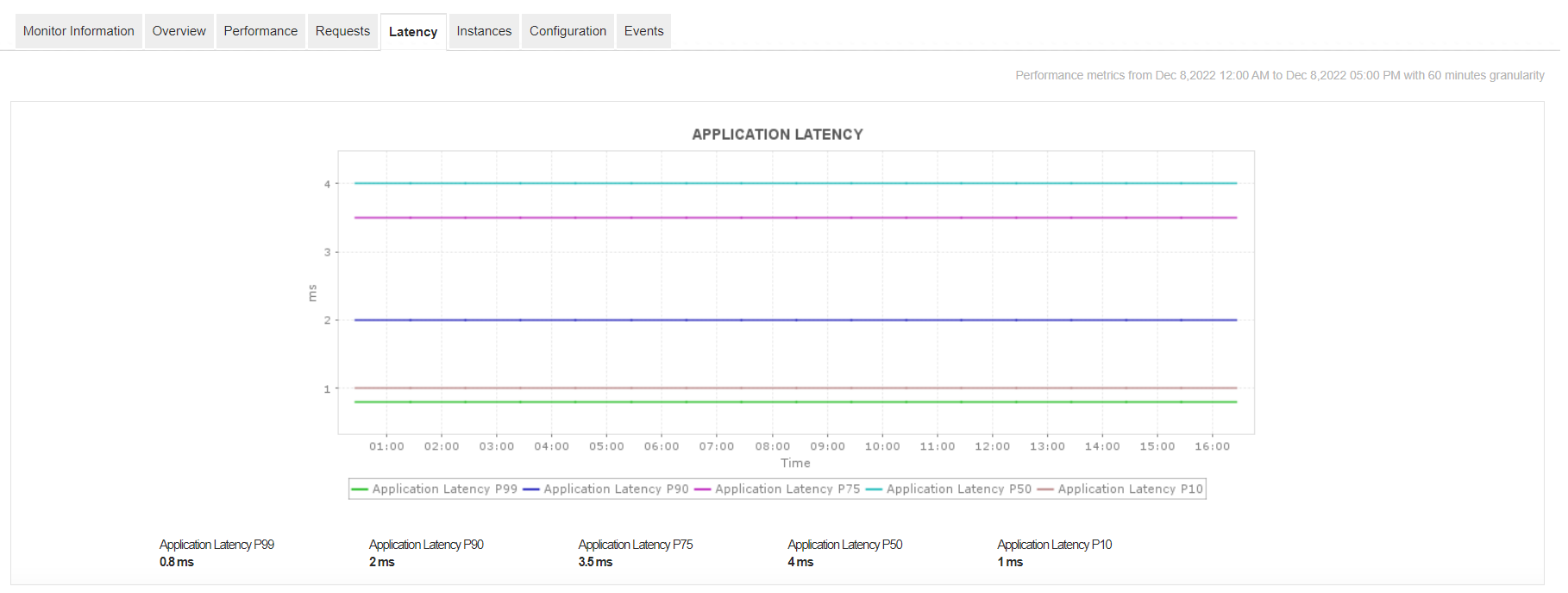

Latency

In AWS, latency generally refers to the time an application takes to respond to a user request. It is measured in different ways depending on the use case: it can be based on application response time, or on the response time of services such as the EC2 metadata service or EBS volumes. Latency is often caused by poor network connections, misconfigured backend hosts, or complex dependencies between web servers.

- Monitoring transaction latencies and tuning the underlying database queries can significantly improve application performance.
- Tracking disk latencies can uncover resource constraints that contribute to deteriorating database performance.
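When tracking latency, percentiles usually say more than averages, because a few slow requests can hide behind a healthy-looking mean. The minimal sketch below computes nearest-rank percentiles from a batch of latency samples, such as per-request response times exported from your application or load balancer logs. The function name and the sample values are hypothetical.

```python
import math
from typing import Dict, Sequence


def latency_percentiles(
    latencies_ms: Sequence[float], percentiles: Sequence[int] = (50, 90, 99)
) -> Dict[int, float]:
    """Return nearest-rank percentiles (e.g. p50, p90, p99) of latency samples."""
    if not latencies_ms:
        raise ValueError("no latency samples")
    ordered = sorted(latencies_ms)
    n = len(ordered)
    result: Dict[int, float] = {}
    for p in percentiles:
        rank = math.ceil(p * n / 100)          # 1-based nearest rank
        result[p] = ordered[max(rank, 1) - 1]  # convert to 0-based index
    return result


if __name__ == "__main__":
    samples = [12, 15, 11, 14, 13, 250, 12, 16, 13, 14]  # hypothetical, in ms
    print(latency_percentiles(samples))  # {50: 13, 90: 16, 99: 250}
```

In this sample the mean is 37 ms, which describes neither the typical request (13 ms at p50) nor the slow outlier (250 ms at p99); watching p50 and a high percentile together shows both.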

Applications Manager’s AWS monitoring capabilities let you monitor and discover AWS resource changes in real time. You can use the auto-scaling and load distribution mechanisms of AWS to keep workloads from performing poorly and to automatically provision resources in your virtual environments for better operational efficiency. You can also start, stop, or restart your AWS virtual machines without logging in to the AWS console.

Applications Manager can monitor more than 150 kinds of applications spanning on-premises technologies, virtualization, containers, and cloud domains from a single console, giving you full visibility into your infrastructure.

If you’re new to Applications Manager, download a free, 30-day trial to explore it, or schedule a personalized demo for a guided tour.